The "how did you hear about us?" question has been on intake forms and demo request pages for decades. Most companies collect the data and don't look at it seriously. It feels too unscientific, too subject to bad recall, too inconsistent to base decisions on.

But when you compare self-reported attribution data against tracked attribution data at scale - across thousands of deals - you start seeing patterns that tracked data alone systematically misses. The two methods don't just disagree on individual records. They disagree in consistent, predictable ways that reveal specific blind spots in your tracking infrastructure.

Here's what we found in a cross-analysis of 10,000 B2B closed-won deals.

The Setup

The dataset came from Attribify customers across seven B2B SaaS and professional services categories, all with average deal sizes between $8,000 and $85,000 and sales cycles between 21 and 120 days. Every deal had both a self-reported source (collected at demo booking or trial sign-up via a required free-text or dropdown field) and a tracked attribution source (UTM parameters captured at form submission, tied back through the attribution model to campaign-level data).

We compared first-touch sources: what the buyer said first brought them to the site or into the funnel, versus the first touchpoint the tracking data recorded.
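If your CRM stores both fields, the pairing step can be sketched in a few lines of Python. This is a minimal illustration, not Attribify's implementation: the `Deal` fields, the `CHANNEL_MAP` buckets, and the exact-match normalization are all assumptions you would replace with your own taxonomy. The one real requirement is that both sources map into the same set of channels before you compare them, which is what makes a disagreement rate (39% in this dataset) meaningful.

```python
from dataclasses import dataclass

# Hypothetical channel buckets. Keys are lowercased self-reported dropdown
# values or UTM sources; extend this with your own taxonomy.
CHANNEL_MAP = {
    "word of mouth": "word_of_mouth",
    "referral": "word_of_mouth",
    "linkedin": "linkedin",
    "paid search": "paid_search",
    "google_ads": "paid_search",
    "email": "email",
    "newsletter": "email",
    "podcast": "media_events",
    "event": "media_events",
    "direct": "direct",
}


@dataclass
class Deal:
    deal_id: str
    self_reported: str   # hypothetical field: "How did you hear about us?" answer
    tracked_source: str  # hypothetical field: first-touch UTM source


def normalize(raw: str) -> str:
    """Map a raw answer or UTM source to a shared channel; unknown values -> 'other'."""
    return CHANNEL_MAP.get(raw.strip().lower(), "other")


def disagreement_rate(deals: list[Deal]) -> float:
    """Fraction of deals whose normalized self-reported and tracked channels differ."""
    if not deals:
        return 0.0
    mismatches = sum(
        normalize(d.self_reported) != normalize(d.tracked_source) for d in deals
    )
    return mismatches / len(deals)
```

Free-text answers will need a much larger mapping (or manual review) than this. Anything unmapped falls into `other`, which keeps it visible in the comparison instead of silently dropping it.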

Where Tracked Attribution Gets It Wrong

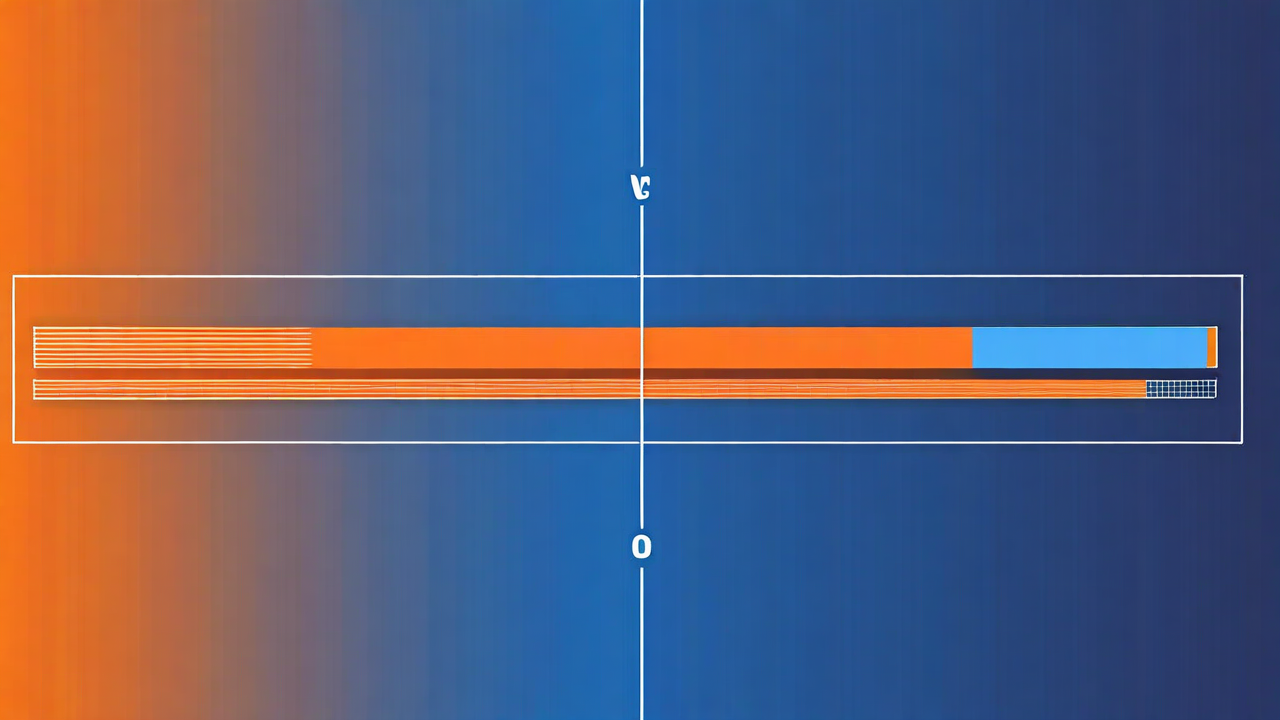

The patterns of disagreement were striking. In the 39% of deals where the tracked and self-reported first-touch sources disagreed, the disagreements weren't randomly distributed. Specific channels were consistently over- or under-credited by tracking relative to what buyers reported.

Word of mouth, LinkedIn, and media/events were dramatically undercounted in tracking. Paid search and email were overcounted. This is almost exactly the pattern you'd predict from first principles: tracking captures clicks, word of mouth doesn't generate clicks, LinkedIn often generates impressions rather than clicks, and podcasts and live events leave no click to capture at all.
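Measuring over- and under-crediting comes down to comparing each channel's share of deals under the two methods. Here is a minimal sketch, assuming each deal has already been reduced to one normalized channel label per method; the function names are hypothetical.

```python
from collections import Counter


def channel_shares(channels: list[str]) -> dict[str, float]:
    """Fraction of deals credited to each channel."""
    counts = Counter(channels)
    total = len(channels)
    return {ch: n / total for ch, n in counts.items()}


def share_gaps(self_reported: list[str], tracked: list[str]) -> dict[str, float]:
    """Self-reported share minus tracked share per channel, in percentage points.

    Positive values: tracking undercounts the channel.
    Negative values: tracking overcounts the channel.
    """
    sr = channel_shares(self_reported)
    tr = channel_shares(tracked)
    return {
        ch: round(100 * (sr.get(ch, 0.0) - tr.get(ch, 0.0)), 1)
        for ch in set(sr) | set(tr)
    }


if __name__ == "__main__":
    # Toy data (not from the study), built to mirror a 19% vs 4% word-of-mouth split.
    reported = ["word_of_mouth"] * 19 + ["other"] * 81
    tracked = ["word_of_mouth"] * 4 + ["other"] * 96
    print(sorted(share_gaps(reported, tracked).items()))
```

On that toy set, `word_of_mouth` comes out at +15.0 points and `other` at -15.0, the same arithmetic as the word-of-mouth gap discussed next.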

Why Word of Mouth Is the Most Undercounted Channel

Word-of-mouth referrals account for 19% of first-touch sources in self-reported data, but only 4% in tracked attribution. The gap is 15 percentage points - the largest discrepancy in the dataset.

This makes structural sense. When someone tells a colleague about your product, that colleague doesn't click an ad. They're more likely to type your URL straight into the browser or search for your company name, so the first visit shows up as "direct" (or as a branded search) in your analytics. Unless you capture the referrer's name or ask about referrals explicitly, the referral vanishes.

The practical implication: customer success and retention work that generates referrals is probably more valuable than your tracked attribution data suggests. A company whose tracking credits referrals with 4% of closed-won deals while buyers credit them with 19% has a significant line item that's invisible in its budget conversations.
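You can check whether this happens in your own data with a simple cross-tab: take the deals where the buyer named word of mouth and count which channel tracking credited instead. A sketch, assuming you already have one (self-reported, tracked) channel pair per deal; the function name and the `word_of_mouth` label are illustrative.

```python
from collections import Counter


def where_referrals_land(
    pairs: list[tuple[str, str]], reported_label: str = "word_of_mouth"
) -> Counter:
    """Count tracked first-touch channels for deals whose buyer reported `reported_label`.

    `pairs` holds one (self_reported_channel, tracked_channel) tuple per deal,
    already normalized to shared channel names (an assumed input format).
    """
    return Counter(tracked for reported, tracked in pairs if reported == reported_label)


if __name__ == "__main__":
    # Toy pairs for illustration only.
    pairs = [
        ("word_of_mouth", "direct"),
        ("word_of_mouth", "direct"),
        ("word_of_mouth", "organic_search"),
        ("linkedin", "linkedin"),
    ]
    print(where_referrals_land(pairs).most_common())
```

If most self-reported referrals land in direct or search buckets, that's the structural gap described above, visible in your own records.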

What Self-Reported Data Gets Wrong

Self-reported attribution has its own systematic biases. Human memory tends to anchor on the most salient or most recent touchpoint, not necessarily the first one. A buyer who saw a LinkedIn ad three months ago, read two blog posts, watched a webinar, and then got a personalized outbound email from a sales rep is likely to say they "heard about you from a sales rep," because that email was the direct trigger for taking action and is freshest in memory.

Buyers also tend to underreport ad exposure. Nobody wants to admit the retargeting ad got them. They'll say organic search or word of mouth because that narrative makes them feel less like they were "sold to."

Neither tracked nor self-reported attribution is right on its own. Both are systematically biased, in different and partially predictable directions. The value comes from running both and looking at where they diverge.

How to Use This in Practice

The most useful application is triangulation. When tracked and self-reported data agree, you can act with higher confidence. When they disagree significantly, you have a measurement gap worth investigating.

Run this analysis on your own closed-won deals quarterly. It takes about 30 minutes if your CRM has both data fields. Look for channels that consistently score higher in self-reported attribution than in tracked attribution: those are your blind spots and likely underinvested channels.
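The "consistently" part matters, because a channel that outscores tracking in a single quarter may just be noise. One way to encode it, as a sketch: keep the per-channel gaps from each quarterly run (self-reported share minus tracked share, in percentage points) and flag only the channels that clear a minimum gap every time. The input format and the 2-point default threshold are assumptions, not recommendations from this analysis.

```python
def consistent_blind_spots(
    quarterly_gaps: dict[str, dict[str, float]], min_gap_pts: float = 2.0
) -> list[str]:
    """Channels whose self-reported share beats the tracked share by at least
    `min_gap_pts` percentage points in every quarter provided.

    `quarterly_gaps` maps a quarter label to {channel: gap in points}
    (hypothetical input format; gap = self-reported minus tracked).
    """
    if not quarterly_gaps:
        return []
    quarters = list(quarterly_gaps.values())
    # Only channels measured in every quarter can be "consistent".
    candidates = set(quarters[0])
    for gaps in quarters[1:]:
        candidates &= set(gaps)
    return sorted(
        ch for ch in candidates if all(gaps[ch] >= min_gap_pts for gaps in quarters)
    )


if __name__ == "__main__":
    # Made-up gaps for illustration; not results from the study.
    gaps = {
        "Q1": {"linkedin": 10.0, "email": -4.0, "word_of_mouth": 14.0},
        "Q2": {"linkedin": 12.0, "email": -2.0, "word_of_mouth": 1.0},
    }
    print(consistent_blind_spots(gaps))
```

With the made-up numbers above, only `linkedin` clears the bar in both quarters; `word_of_mouth` drops out because of its weak second quarter.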

For the dataset in this analysis, the biggest budget implication was LinkedIn. Companies seeing LinkedIn at 9% in tracked attribution but 21% in self-reported attribution were systematically underspending on a channel their buyers named as how they first learned about them. That's a meaningful investment signal that click-based tracking was actively hiding.

Your numbers won't match ours exactly. But if you have enough closed-won deals to run the comparison, I'd bet the patterns look more similar than you'd expect. Start with 100 deals and see what you find.
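How much can 100 deals tell you? One quick way to gauge the noise is a confidence interval on each channel's share. The sketch below uses the Wilson score interval, a standard choice for proportions; it's an illustrative addition, not part of the analysis above.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion; z=1.96 gives roughly 95% coverage."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    z2 = z * z
    denom = 1 + z2 / n
    center = (p + z2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z2 / (4 * n * n)) / denom
    return center - half, center + half


if __name__ == "__main__":
    # e.g. a channel named in 19 of 100 self-reported answers vs 4 of 100 tracked first touches
    print(wilson_interval(19, 100))
    print(wilson_interval(4, 100))
```

For 19 of 100 deals the interval runs from roughly 12.5% to 27.8%; for 4 of 100, roughly 1.6% to 9.8%. A gap as large as the word-of-mouth one can stand out at that sample size, while a gap of a few points won't. Because both shares come from the same deals, a paired test such as McNemar's test is the more rigorous check; non-overlapping intervals are only a rough screen.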

Close the gap between what buyers say and what tracking shows

Attribify combines tracked multi-touch data with survey attribution fields so you can see both views in one place.
